Taking vision as an example (the same applies to any other data-gathering device), the main problem lies in dealing with the variability and complexity of inputs. The number of possible system states is far beyond any a priori memorization and classification, unless the input system parcels the input patterns into regions small enough to have a limited number of input states or patterns, and allows each region to classify its state and recombine it with the surrounding states independently.
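The parceling idea can be illustrated with a minimal Python sketch. It assumes a binary image and non-overlapping 2x2 regions, neither of which is specified in this entry; the names `region_state` and `parcel` are hypothetical. A 4x4 binary image has 2^16 = 65,536 possible states as a whole, while each 2x2 region has only 2^4 = 16, so each region can be classified on its own.

```python
from typing import Dict, List, Tuple

Image = List[List[int]]  # binary image: rows of 0/1 pixels (an assumption)


def region_state(image: Image, top: int, left: int, height: int, width: int) -> int:
    """Encode one region's pixels as an integer in [0, 2**(height*width))."""
    state = 0
    for r in range(top, top + height):
        for c in range(left, left + width):
            state = (state << 1) | image[r][c]
    return state


def parcel(image: Image, height: int, width: int) -> Dict[Tuple[int, int], int]:
    """Split the image into non-overlapping regions and classify each one independently."""
    rows, cols = len(image), len(image[0])
    if rows % height or cols % width:
        raise ValueError("image size must be a multiple of the region size")
    return {
        (top // height, left // width): region_state(image, top, left, height, width)
        for top in range(0, rows, height)
        for left in range(0, cols, width)
    }


image = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 1],
    [0, 0, 1, 0],
]
states = parcel(image, 2, 2)  # keys are (region_row, region_col)
```

Each region's state depends only on its own pixels, so the regions could be evaluated in any order or in parallel.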

In the Brain Module of the EYEYE system, live data is obtained in parallel by many independent sensory cells. Each cell accepts a limited number of primitives that cover all the possibilities of its phase space, and uses simple software to analyze its input and output the results to a higher-level sensory cell. Processing the same amount of live data sequentially would take much longer, producing latency that would not permit a constant flow of live data. Information flow and verification occur between levels in a manner similar to asynchronous Hopfield nets, and are housed in a novel parallel architecture of a cellular type. The architecture is expandable and mathematically complete and consistent.
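For readers unfamiliar with the comparison, the following is a minimal sketch of a textbook asynchronous Hopfield network in Python. It is not the EYEYE mechanism, which this entry does not detail; the function names, the Hebbian training rule and the 8-unit pattern are illustrative assumptions. Units are updated one at a time in random order until the state stops changing.

```python
import random
from typing import List


def train_hebbian(patterns: List[List[int]]) -> List[List[float]]:
    """Build a symmetric weight matrix with zero diagonal from +1/-1 patterns."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall_async(weights: List[List[float]], state: List[int],
                 rng: random.Random, max_sweeps: int = 20) -> List[int]:
    """Update one unit at a time, in random order, until a sweep changes nothing."""
    state = list(state)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        order = list(range(n))
        rng.shuffle(order)
        for i in order:
            field = sum(weights[i][j] * state[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


stored = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train_hebbian([stored])
noisy = list(stored)
noisy[0] = -noisy[0]  # corrupt one unit
recovered = recall_async(weights, noisy, random.Random(0))
```

With a single stored pattern and one corrupted unit, the network settles back to the stored pattern regardless of the update order.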

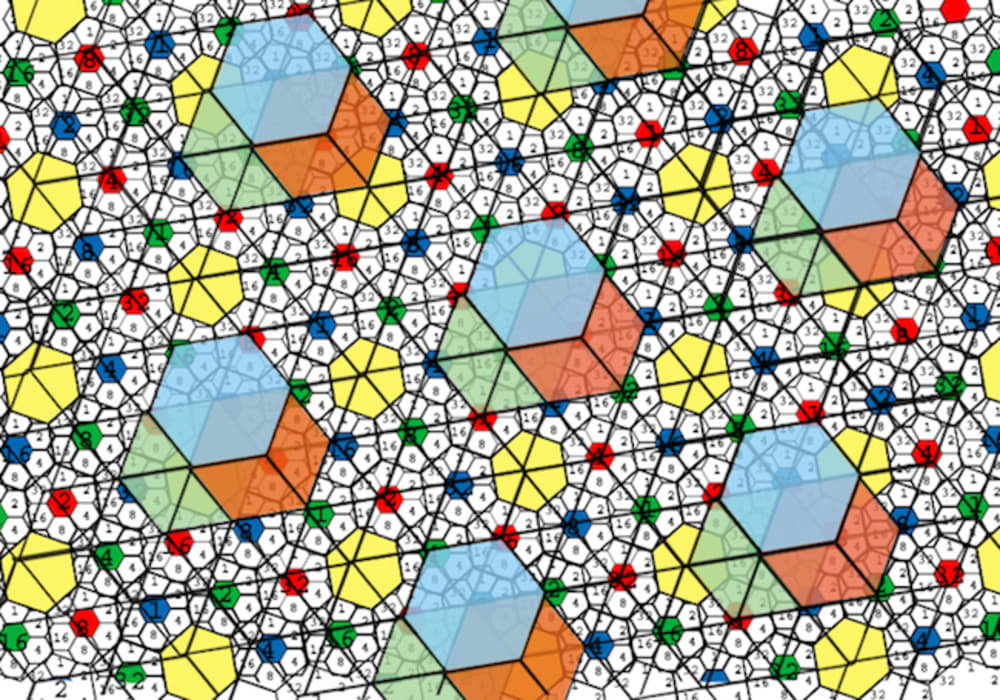

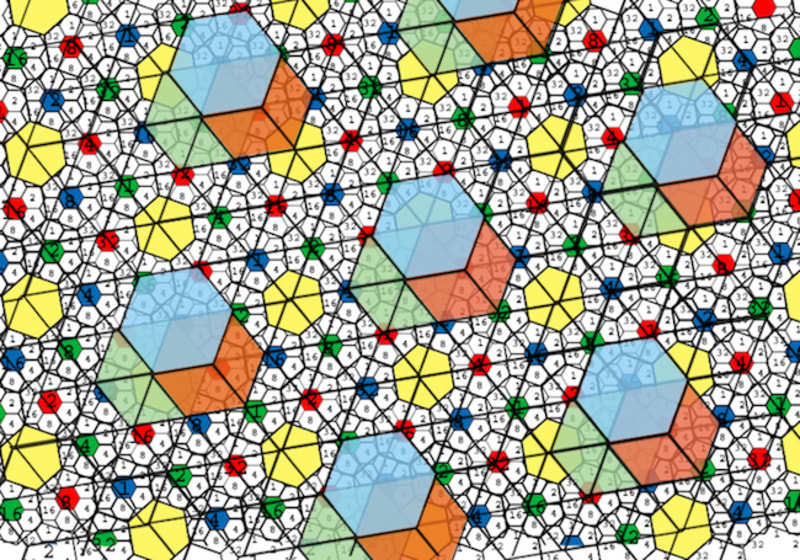

Letters in a phonetic language each represent a unit sound, and they can be strung together to form innumerable words. Having 64 basic patterns, where each pattern represents a “pictorial sound” in a given region of space, makes it possible to compose any 2D shape (“pictorial word”) from them. The exact position of that word in 2D space does not need to be remembered, because the architecture already places it in a specific region. The Cambridge scrambled text example shows that “reading” is possible as long as all the sounds (letters) are included and the edge sounds (first and last letter) are in their place.
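The scrambled-text observation can be reproduced with a short Python sketch. The helper names `scramble_word` and `word_signature` are hypothetical, and the signature is only one simple way to model "all letters present, edges in place".

```python
import random
from typing import Tuple


def scramble_word(word: str, rng: random.Random) -> str:
    """Shuffle the interior letters, keeping the first and last letter in place."""
    if len(word) <= 3:
        return word  # nothing to shuffle
    middle = list(word[1:-1])
    rng.shuffle(middle)
    return word[0] + "".join(middle) + word[-1]


def word_signature(word: str) -> Tuple[str, str, str]:
    """Order-independent key: first letter, sorted interior letters, last letter."""
    if len(word) < 2:
        return (word, "", word)
    return (word[0], "".join(sorted(word[1:-1])), word[-1])


rng = random.Random(42)
sentence = "reading scrambled words remains possible"
scrambled = " ".join(scramble_word(w, rng) for w in sentence.split())

# Every scrambled word keeps the signature of its original.
matches = [
    word_signature(a) == word_signature(b)
    for a, b in zip(sentence.split(), scrambled.split())
]
```

The signature is not unique for every word: "form" and "from" share one, so a reader (or a recognizer) still needs context to separate such cases.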

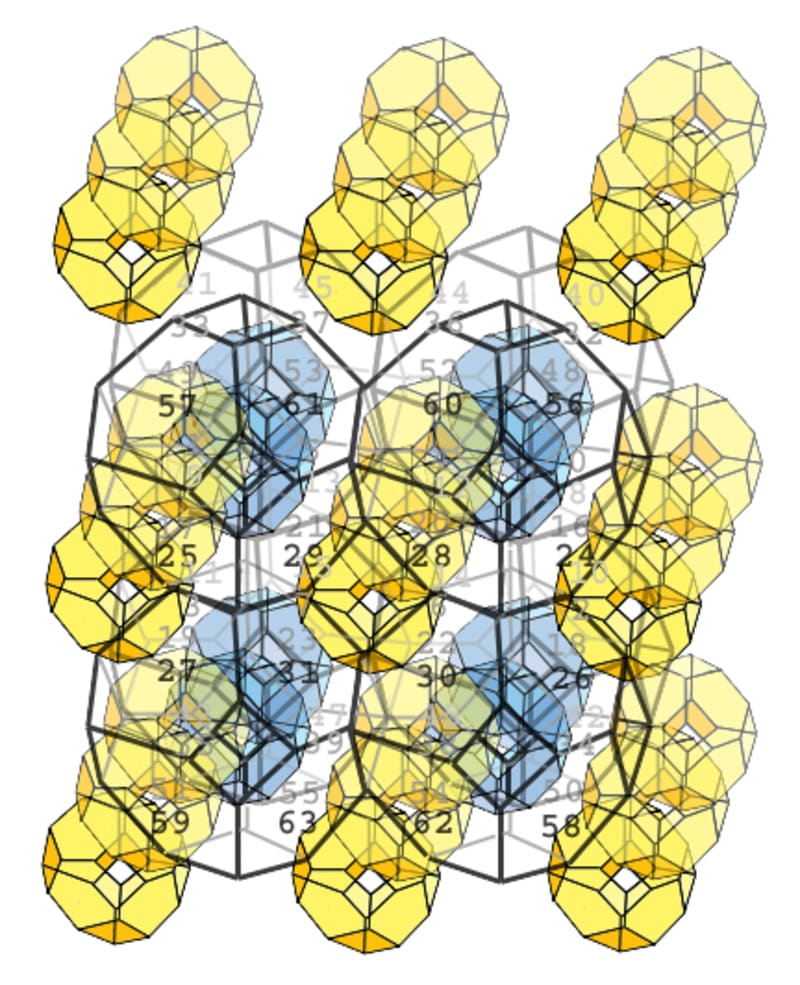

In the EYEYE system the “pictorial word” is memorized in a 3D symbolic space network composed of hash units that contain similar “pictorial sounds”, so that variation does not affect recognition. The input pattern is transformed into a 2D output pattern that can be used by other modules as input, and can be received from other modules as a “focus”. Memory at the node level permits recreation of the memorized 3D symbolic network without imposing a limit on the number of “pictorial words” that can be memorized, all within a strictly defined amount of memory.
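One way to picture variation-tolerant recognition through units of similar patterns is the Python sketch below. Everything in it is an assumption made for illustration: the unit names, the pattern codes grouped in each unit, and the flat sequence used as a key (the entry describes a 3D network, which this sketch does not attempt to model).

```python
from typing import Dict, FrozenSet, List, Optional, Tuple

# Hypothetical units: each groups pattern codes that are treated as similar.
UNITS: Dict[str, FrozenSet[int]] = {
    "u0": frozenset({3, 7}),
    "u1": frozenset({12, 13}),
    "u2": frozenset({40, 41, 42}),
}
PATTERN_TO_UNIT: Dict[int, str] = {
    code: unit for unit, codes in UNITS.items() for code in codes
}

memory: Dict[Tuple[str, ...], str] = {}  # unit sequence -> label


def to_units(patterns: List[int]) -> Optional[Tuple[str, ...]]:
    """Replace each pattern code by the unit that contains it, if every code is known."""
    try:
        return tuple(PATTERN_TO_UNIT[p] for p in patterns)
    except KeyError:
        return None


def memorize(patterns: List[int], label: str) -> None:
    """Store a label under the unit sequence of the given patterns."""
    key = to_units(patterns)
    if key is None:
        raise ValueError("pattern code not covered by any unit")
    memory[key] = label


def recognize(patterns: List[int]) -> Optional[str]:
    """Look up the label for patterns that map to a memorized unit sequence."""
    key = to_units(patterns)
    return memory.get(key) if key is not None else None


memorize([3, 12, 40], "shape-A")
variant = recognize([7, 13, 42])     # different codes, same units
reordered = recognize([12, 3, 40])   # same codes, different order
unknown = recognize([3, 12, 99])     # code 99 belongs to no unit
```

Because the key is built from units rather than raw codes, a variant input that falls into the same units is recognized as the memorized word, while a reordered or unknown input is not.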

In the EYEYE system it becomes possible to make synesthetic comparisons, concatenations and analogies between different types of patterned inputs without switching to another type of software, thereby specifying a given 3D network that represents more than one input. Eventually such a system could form ideas and support a more agile type of AI, capable of dealing with the IoT.


About the Entrant

- Name: Neven Dragojlovic

- Type of entry: individual

- Software used for this entry: Hand verification

- Patent status: patented