Pen technology has matured, and improved ink-delivery systems make writing more comfortable than ever. Yet, to our knowledge, no pen available today can capture handwritten characters on ordinary paper and convert them to digital text. One commercial product, LiveScribe, can capture drawing or writing on 'special papers' and upload the sketch as-is to the cloud. But it does not convert the writing to digital text, and it does not support writing on ordinary paper.

'DigiPen' solves the problem efficiently and elegantly. It introduces a new concept of smart input device that does not replace traditional paper writing. This is made possible by the recent rapid development of the machine learning technique called 'deep learning'.

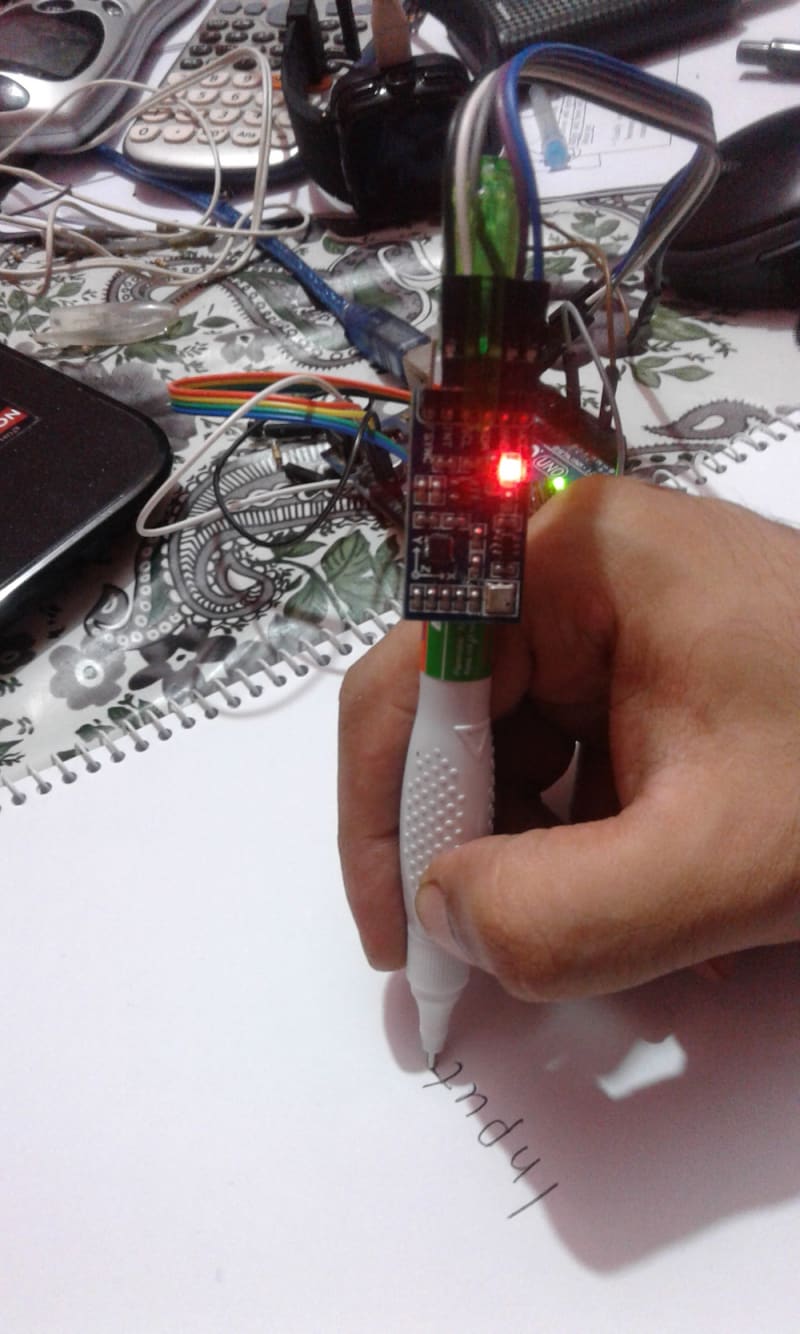

A 'DigiPen' is still a traditional pen that writes on any paper surface, with one significant improvement: it is electronic and, more importantly, it is smart! There are two 'DigiPen' concepts:

1. 'DigiPen Basic', which works with a companion app/software for deep learning and communicates over Bluetooth.

2. 'DigiPen Live', a standalone Wi-Fi enabled system that works without a companion app/software and records handwritten data to the cloud or on-board memory in digital text format.

Hardware Construction:

At first glance, the 'DigiPen' looks like a regular pen that can be used to write on paper. On a closer look, it is an electronic gadget that does a lot more than write. Improved liquid ink is stored in a replaceable ink-tube cartridge with a removable ballpoint/pinpoint nib. Besides delivering ink, the nib also acts as a tactile switch that initiates writing capture.

The electronics unit sits in the tail section. It contains an on-board microcontroller with a 192-node neural network processing engine, a 10DOF IMU sensor module and a Bluetooth/Wi-Fi communication module. A tiny lithium-polymer battery powers the device. An optional one-line display could show additional user interaction information or character suggestions.

Working Principle:

An on-board 10DOF inertial measurement sensor captures the user's handwriting gestures while writing on any surface. The sensor data is transmitted wirelessly to a smartphone or PC in real time. The companion app/software then converts the gesture information into digital text that can be fed to any word-processing application or text-input system. The core of the technology is a deep learning algorithm that classifies handwritten characters from inertial measurement data with up to 99.2% accuracy. A deep Convolutional Neural Network (CNN) makes it possible to recognize handwriting gestures on-board or in the companion app from minimal end-user training data.
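
The capture path described above can be illustrated with a minimal Python sketch. It is not the entry's actual implementation (the entry lists MATLAB/SIMULINK as its tooling) and everything named here is an assumption: the 10-channel layout (3 accelerometer, 3 gyroscope, 3 magnetometer and 1 pressure value), the window length of 64 samples, and the helper names `split_strokes`, `resample`, `normalize` and `conv1d_relu`. It shows how the nib switch could gate the IMU stream into strokes, how each stroke could be brought to a fixed size, and what a single convolutional layer computes over that window. The weights are untrained placeholders.

```python
import numpy as np

N_CHANNELS = 10   # assumed 10DOF layout: 3 accel + 3 gyro + 3 mag + 1 pressure
WINDOW_LEN = 64   # hypothetical fixed window length fed to the classifier


def split_strokes(samples, nib_pressed):
    """Split a (T, C) IMU stream into the runs where the nib switch is pressed."""
    samples = np.asarray(samples, dtype=float)
    pressed = np.asarray(nib_pressed, dtype=bool)
    # Pad with False so every pressed run has both a rising and a falling edge.
    padded = np.concatenate(([False], pressed, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)
    ends = np.flatnonzero(edges == -1)
    return [samples[s:e] for s, e in zip(starts, ends)]


def resample(segment, length=WINDOW_LEN):
    """Linearly resample a (T, C) segment to (length, C)."""
    t_old = np.linspace(0.0, 1.0, len(segment))
    t_new = np.linspace(0.0, 1.0, length)
    columns = [np.interp(t_new, t_old, segment[:, c])
               for c in range(segment.shape[1])]
    return np.stack(columns, axis=1)


def normalize(window):
    """Give every channel zero mean and unit variance."""
    mean = window.mean(axis=0)
    std = window.std(axis=0)
    return (window - mean) / np.where(std > 0, std, 1.0)


def conv1d_relu(window, kernels, bias):
    """One 'valid' 1-D convolution over time followed by ReLU.

    window: (T, C), kernels: (K, C, F), bias: (F,) -> output (T - K + 1, F)
    """
    k = kernels.shape[0]
    # patches has shape (T - K + 1, C, K): one K-sample slice per time step.
    patches = np.lib.stride_tricks.sliding_window_view(window, k, axis=0)
    out = np.einsum("tck,kcf->tf", patches, kernels) + bias
    return np.maximum(out, 0.0)
```

A trained classifier would stack several such layers and end in one output per character class. The sketch stops at the first feature map because the network's real architecture is not given in this entry.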

Usage Policy:

To be introduced as a commercial product, the software should be optimized so that calibration takes minimal user effort. A user might buy the 'DigiPen' and write the phrase 'A QUICK BROWN FOX JUMPS OVER THE LAZY DOG' in both capital and small letters to calibrate it for their own writing style. Simple yet powerful!
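
To illustrate why a single phrase can be enough for calibration, the Python sketch below checks that the phrase covers every letter of the alphabet and builds the list of labels a calibration pass would expect. The helper names `missing_letters` and `calibration_labels` are hypothetical, and the sketch assumes that each letter is captured as one separate stroke, which the entry does not state.

```python
import string

CALIBRATION_PHRASE = "A QUICK BROWN FOX JUMPS OVER THE LAZY DOG"


def missing_letters(phrase):
    """Return the letters of the alphabet that the phrase does not contain."""
    return set(string.ascii_lowercase) - set(phrase.lower())


def calibration_labels(phrase):
    """Expected label per captured stroke: the phrase written in capital
    letters first, then in small letters (assumes one stroke per letter)."""
    letters = [ch for ch in phrase if ch.isalpha()]
    return [ch.upper() for ch in letters] + [ch.lower() for ch in letters]


labels = calibration_labels(CALIBRATION_PHRASE)
print(sorted(missing_letters(CALIBRATION_PHRASE)))  # empty list: all 26 letters covered
print(len(labels), len(set(labels)))                # 66 labelled strokes, 52 classes
```

Pairing these labels in order with the captured strokes would give a small per-user training set that contains at least one example of each of the 52 letter classes.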

Aside from handwriting recognition, the pen could also accept gesture commands, either preset or calibrated by the user. For example, a user might define an interaction in which drawing a box after a phrase saves that phrase as a title, and much more!
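
One way to picture such user-defined interactions is a lookup table from recognized gesture to action. The Python sketch below is only an illustration: the `Note` type, the `"box"` gesture label, and the functions `save_as_title` and `handle` are hypothetical names, not part of the 'DigiPen' software.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    title: str = ""
    body: list = field(default_factory=list)


def save_as_title(note, phrase):
    """Action for the example above: the boxed phrase becomes the title."""
    note.title = phrase


# Hypothetical gesture-to-action table; a user could add or remap entries.
GESTURE_ACTIONS = {"box": save_as_title}


def handle(note, phrase, gesture=None):
    """Apply the action bound to the gesture, or store the phrase as plain text."""
    action = GESTURE_ACTIONS.get(gesture)
    if action is None:
        note.body.append(phrase)
    else:
        action(note, phrase)
    return note
```

With this table, `handle(Note(), "Meeting notes", "box")` sets the title, while a phrase followed by no gesture is appended to the body.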

About the Entrant

- Name: Abu Anas Shuvom

- Type of entry: individual

- Software used for this entry: MATLAB/SIMULINK

- Patent status: pending