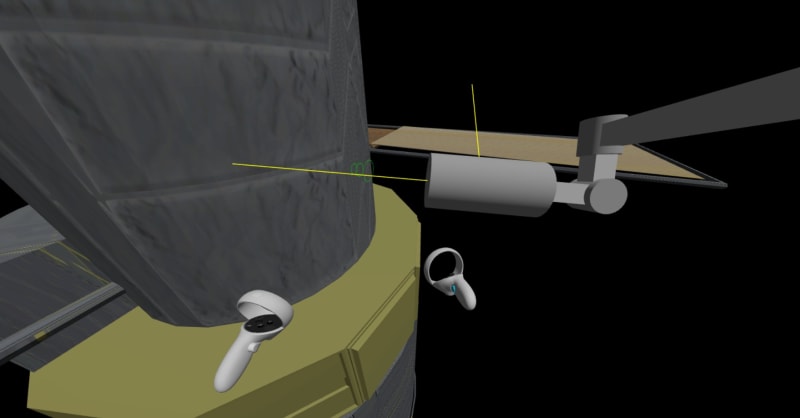

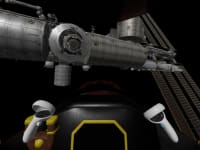

The robot arm on the ISS is controlled with two joysticks, one for translation and one for rotation. A helicopter pilot uses a joystick, collective lever, and foot pedals to control the aircraft. A hobby drone operator uses a remote control unit with two joysticks. Even the SpaceX Dragon's 2D touchscreen essentially provides two joysticks.

All of these require significant effort for an operator to learn and use. But a 3-year-old kid with a toy jet can do maneuvers that would make a Top Gun pilot jealous, and can land a toy helicopter with pinpoint accuracy. Anyone can easily position a multi-jointed desktop lamp exactly as needed, simply by grabbing it. We propose a control scheme using direct manipulation to operate robot arms, aircraft, drones, and spacecraft that requires minimal training and provides precise results.

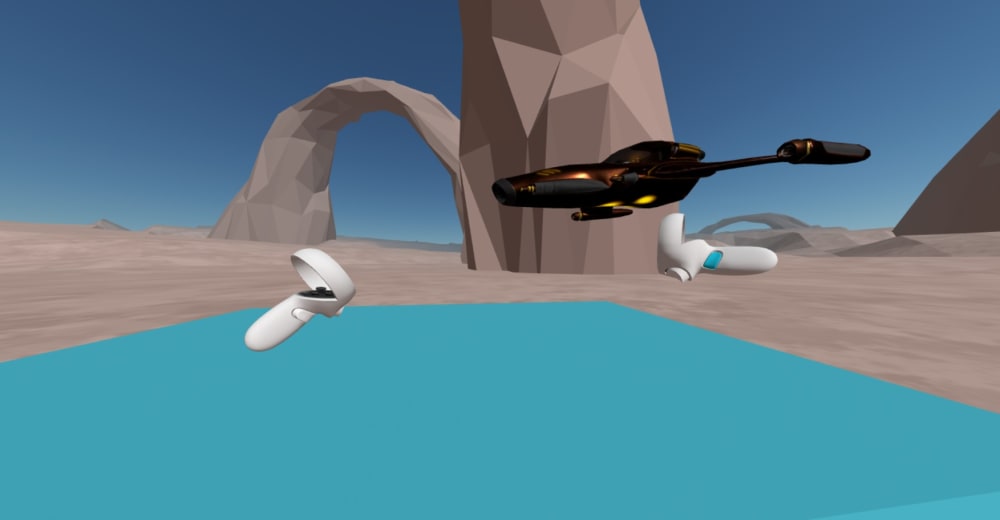

Virtual reality systems like the Meta Quest and HTC Vive include hand controllers, or even controller-free hand tracking, that provide real-time six-degree-of-freedom pose information using computer vision. That input can be used to control a vehicle or robot arm by virtually "grabbing" and posing it in a very natural way, by moving and rotating the hand. Hand motion relative to the hand's pose at the start of the grab is applied to the vehicle or robot arm, and the avionics or control system translates those motion requests into appropriate thruster, control surface, or arm joint commands.
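The relative-pose mapping described above can be sketched in a few lines. This is only an illustration, not the prototype's code: the `Grab` class and helper names are hypothetical, quaternions are `(w, x, y, z)` tuples, and it assumes hand motion is applied 1:1 in a shared world frame.

```python
import math

def q_mul(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def q_conj(q):
    """Conjugate, which is the inverse for a unit quaternion."""
    w, x, y, z = q
    return (w, -x, -y, -z)

def q_from_axis_angle(axis, angle_rad):
    """Unit quaternion for a rotation of angle_rad about the given axis."""
    ax, ay, az = axis
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    s = math.sin(angle_rad / 2) / norm
    return (math.cos(angle_rad / 2), ax * s, ay * s, az * s)

class Grab:
    """Records hand and object poses at grab time (hypothetical helper)."""

    def __init__(self, hand_pos, hand_rot, obj_pos, obj_rot):
        self.hand_pos0 = hand_pos
        self.hand_rot0_inv = q_conj(hand_rot)
        self.obj_pos0 = obj_pos
        self.obj_rot0 = obj_rot

    def target(self, hand_pos, hand_rot):
        """Requested object pose for the current hand pose."""
        # Translation of the hand since the grab started.
        delta_pos = tuple(h - h0 for h, h0 in zip(hand_pos, self.hand_pos0))
        # Rotation of the hand since the grab started.
        delta_rot = q_mul(hand_rot, self.hand_rot0_inv)
        pos = tuple(p + d for p, d in zip(self.obj_pos0, delta_pos))
        rot = q_mul(delta_rot, self.obj_rot0)
        return pos, rot
```

The returned pose is only a request; in a real system it would be handed to the avionics or arm controller rather than applied directly.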

A direct hand interface does limit the range of motion, for example when a 360-degree roll about the forward axis is desired. Two natural options are available. In a "ratcheting" mode, the user releases control, resets their hand to a neutral or counter-adjusted pose, and re-grabs control to continue the motion. In a "velocity" mode, the angle or distance from the grab pose applies a corresponding velocity to the object. The first mode is like using a manual screwdriver, while the second is like using a power screwdriver or a typical joystick. The two modes can be combined: small inputs use direct position or angle control, which keeps object control precise and natural and still allows ratcheting when needed, while deflection beyond a threshold automatically switches to velocity control to provide continuous wide-range motion. For primary forward motion of a vehicle, a separate throttle control (or a trigger button on the tracking hand controller) can be useful.
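One way the combined mode might look for a single axis is sketched below. The `HybridAxis` class, its `threshold` and `gain` parameters, and the linear velocity law are all assumptions made for illustration; the entry does not specify them.

```python
import math

class HybridAxis:
    """Single-axis sketch of combined direct and velocity control."""

    def __init__(self, threshold, gain):
        self.threshold = threshold  # deflection where velocity mode starts
        self.gain = gain            # velocity per unit of excess deflection
        self.offset = 0.0           # motion accumulated so far
        self.direct = 0.0           # direct contribution of the current grab

    def update(self, deflection, dt):
        """Return the requested position for a hand deflection from the grab pose."""
        excess = abs(deflection) - self.threshold
        if excess > 0:
            # Velocity mode: integrate a speed proportional to the excess.
            self.offset += math.copysign(self.gain * excess, deflection) * dt
            self.direct = math.copysign(self.threshold, deflection)
        else:
            # Direct mode: the object follows the hand one-to-one.
            self.direct = deflection
        return self.offset + self.direct

    def release(self):
        """Ratchet: keep the motion made so far, ready for a new grab."""
        self.offset += self.direct
        self.direct = 0.0
```

Holding the direct contribution at the threshold while velocity mode is active keeps the output continuous when the hand crosses the threshold in either direction.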

The actual speed and range of motion of the vehicle or arm may be constrained by performance and safety limits. To the user, this feels like "inertia" in the object's response, but doesn't detract from the natural and direct sense of control.
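As a rough illustration of that "inertia", the sketch below moves one axis toward the requested value while respecting a speed limit and a range limit. The function name and parameters are hypothetical, and a real vehicle or arm controller would enforce far richer constraints.

```python
def limited_step(current, target, dt, max_speed, lo, hi):
    """Advance current toward target, honoring range and speed limits."""
    # Range limit: never request a value outside [lo, hi].
    target = max(lo, min(hi, target))
    # Speed limit: move at most max_speed * dt in one update.
    max_step = max_speed * dt
    step = max(-max_step, min(max_step, target - current))
    return current + step
```

Called once per control update, the commanded value trails a fast hand movement and then catches up once the hand stops.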

The proposed scheme could be applied anywhere joysticks are currently used and 3D hand tracking is feasible, including video games, drones, aircraft, and spacecraft.

About the Entrant

- Name: Jack Morrison

- Type of entry: individual

- Software used for this entry: Prototype/demo uses A-Frame/Three.js web VR framework

- Patent status: none