It will be very difficult to communicate with aliens because we will have so little in common. We need practice; otherwise, first contact could go terribly wrong. A good way to practice is to learn to talk with a smart but very different species here on Earth. Dolphins are an obvious choice.

Over the last two decades we have made great strides in understanding how the human brain works. That new knowledge is now being turned into products like Rosetta Stone (Figure 2), which exploits a back door between the visual and language modules of our brains. What we need is a Rosetta Stone that teaches humans dolphin language.

We do not know as much about the dolphin brain as we do about the human brain, but we do know that dolphins rely mainly on sound rather than sight, and that the melon is central to this. The melon focuses the sounds a dolphin produces and serves two functions: (1) communication and (2) echolocation (sonar). Dolphins see with sound.

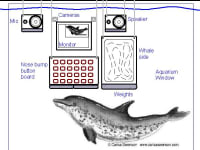

The challenge, then, is to get dolphins to teach a computer to speak dolphin, using their melon as the key communication organ. Then we can get the computer to teach us to speak dolphin. This will require a purpose-designed dolphin computer workstation (Figure 1) with:

1. A state-of-the-art computer with enough power to process multiple high-frequency acoustic signals in real time.

2. Custom AI software that can learn from the dolphins, apply information theory to sort out their sounds, and shift frequencies down into the human hearing range for interactivity.

3. A large monitor behind the aquarium glass.

4. Two digital cameras.

5. An aquatic nose-bump button panel that lets dolphins send input to the computer.

6. Aquatic stereo microphones so the dolphins can be heard.

7. Two aquatic stereo speakers so the computer can respond.

8. Side of a Whale -- A newly designed aquatic mechanical deformation panel whose surface the computer can distort to provide a varied echo signal. Behind a front neoprene sheet is an array of linear actuators that can push the sheet into computer-controlled contours for the dolphin’s melon to scan.
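Two of the software ideas in item 2 can be illustrated with a minimal Python sketch. It is not the proposed AI software, only an illustration under stated assumptions: the frequency down-shift is done by simple time expansion (replaying samples at a lower rate, which is one of several possible methods), and "information theory" is reduced to the Shannon entropy of a sequence of already-labeled sound types. The 192 kHz sample rate, the 40 kHz test tone, the factor of 10, and the labels `A`, `B`, `C` are all hypothetical values chosen for the example.

```python
import math
from collections import Counter


def make_tone(freq_hz, sample_rate_hz, n_samples):
    """Generate a pure sine tone as a stand-in for a recorded dolphin sound."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
            for i in range(n_samples)]


def estimate_frequency(samples, sample_rate_hz):
    """Estimate the dominant frequency by counting rising zero crossings."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    duration_s = len(samples) / sample_rate_hz
    return crossings / duration_s


def shannon_entropy_bits(symbols):
    """Shannon entropy (bits per symbol) of a sequence of labeled sounds."""
    counts = Counter(symbols)
    total = len(symbols)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Assumed values for illustration only.
RECORD_RATE_HZ = 192_000   # hypothetical hydrophone sample rate
EXPANSION_FACTOR = 10      # hypothetical slow-down factor

# A 40 kHz tone is above the range of human hearing.
tone = make_tone(40_000, RECORD_RATE_HZ, 19_200)

# Time expansion: the same samples replayed at one tenth of the rate
# are heard at roughly one tenth of the original frequency.
original_hz = estimate_frequency(tone, RECORD_RATE_HZ)
shifted_hz = estimate_frequency(tone, RECORD_RATE_HZ / EXPANSION_FACTOR)

# Entropy of a made-up sequence of sound labels.
entropy = shannon_entropy_bits("AABBCABCA")

if __name__ == "__main__":
    print(f"original  ~{original_hz:.0f} Hz")
    print(f"shifted   ~{shifted_hz:.0f} Hz")
    print(f"entropy   {entropy:.2f} bits/symbol")
```

Time expansion also slows the sound down, so a real-time interactive system would more likely need a different technique, such as heterodyning or a pitch shifter; the sketch only shows the basic relationship between playback rate and perceived frequency.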

Dolphins love to play, and they are extremely intelligent within their own context. They are curious, and their communication sounds have the information signature of speech. To date, most of our studies of their talk have been conducted in our context, which is unnatural to them. In this approach we give them a defined yet open-ended task: working with a computer whose input and output capabilities match their natural skills, not ours. We will let them do what they want with the computer and then ask the computer to tell us what they have said and done.

When the computer can accurately interpret the dolphins’ voice commands, it will be able to tell us what they said. Then we will have a foundation on which to build mutual communication.

About the Entrant

- Name: Tom Riley

- Type of entry: individual

- Software used for this entry: AutoSketch

- Patent status: none