OVERVIEW

The system aids visually or hearing impaired users through Augmented Glasses (AG), WiFi, and GPS. It works with mobile devices (smartphones and tablets) to identify buildings equipped with this capability, called Locations. AG or mobile devices give the user hands-free or touchpad control of the system. It operates in two modes: Hearing (the user can hear) and Visual (the user can see). Both modes identify points of interest, called Destinations, such as entrances, lobbies, the North entrance, emergency exits, restrooms (and handicapped stalls within), water fountains, stairs, elevators, floors, halls, conference rooms, major office numbers, and keep out zones (KOZs: construction, wet floors, etc.). At Destinations, Locations mount active RFID tags that interact with WiFi.

OPERATION

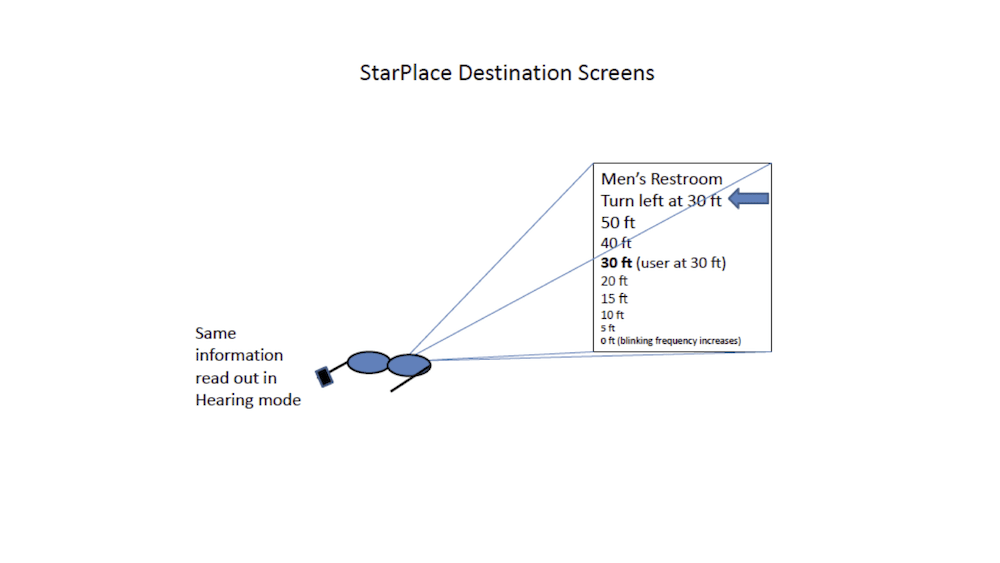

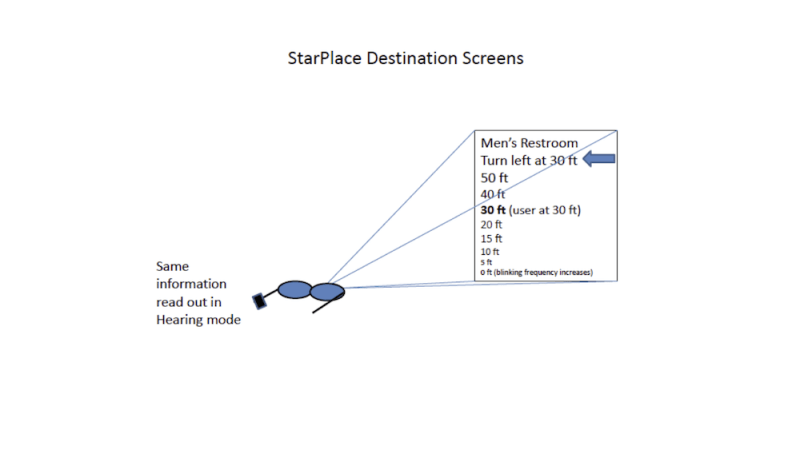

In both modes, a Pointer gives directions via earpiece and on screen. It determines the user's orientation relative to North and tracks changes in direction using the accelerometers already built into the AG or mobile device. The user selects a specific Destination by tapping the AG or touching the mobile device to scroll through Destinations. Each Destination announces itself, for example, “Men’s Restroom – twenty feet, fifteen feet, etc.,” as a voice countdown in Hearing mode and a visual countdown in Visual mode. Either mode can be set to announce all Destinations automatically, to announce only groups such as restrooms or emergency exits, or to exclude some.
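The Pointer logic described above can be sketched roughly as follows. This is only an illustration under assumptions that are not part of the entry: positions are given as (east, north) offsets in feet on a flat local grid, the heading is in degrees clockwise from North, the countdown steps in five-foot increments, and the names `relative_bearing`, `countdown_distance`, and `announce` are hypothetical.

```python
import math

def relative_bearing(user_xy, dest_xy, heading_deg):
    """Degrees the user must turn to face the Destination.

    Positive means clockwise (right), negative means left; range is [-180, 180).
    Coordinates are hypothetical (east, north) offsets in feet.
    """
    dx = dest_xy[0] - user_xy[0]  # east offset
    dy = dest_xy[1] - user_xy[1]  # north offset
    bearing = math.degrees(math.atan2(dx, dy)) % 360  # 0 = North, 90 = East
    return (bearing - heading_deg + 180) % 360 - 180

def countdown_distance(distance_ft, step_ft=5):
    """Round the distance down to the nearest countdown step (assumed 5 ft)."""
    return int(distance_ft // step_ft) * step_ft

def announce(name, user_xy, dest_xy, heading_deg):
    """Build one countdown message, spoken in Hearing mode or shown in Visual mode."""
    distance = math.hypot(dest_xy[0] - user_xy[0], dest_xy[1] - user_xy[1])
    turn = relative_bearing(user_xy, dest_xy, heading_deg)
    if abs(turn) < 15:
        direction = "ahead"
    elif abs(turn) > 165:
        direction = "behind"
    elif turn > 0:
        direction = "right"
    else:
        direction = "left"
    return f"{name} - {countdown_distance(distance)} feet, {direction}"

if __name__ == "__main__":
    # User faces North; the Destination is 17 feet to the east.
    print(announce("Men's Restroom", (0, 0), (17, 0), 0))
```

Calling `announce` again as the user moves would produce the successive countdown values; how often to repeat it, and the 15-degree tolerance for "ahead", are design choices left open here.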

Location setup includes entering unique hall and room numbers, identifying the North entrance, and maintaining KOZs. For construction, the Location enters the GPS coordinates of the zone into the system. For wet floors, a WiFi transmitter mounted on a wet floor sign broadcasts a simple verbal message, “Caution – Wet Floor,” and the AG or mobile device blinks the display over a narrow area. For security and logistics reasons, the system would not give the specific locations of individuals, and some areas would not be labeled as Destinations, though enabling such data could be a system preference.
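One way a device might check GPS-defined KOZs is sketched below. The entry only says that GPS coordinates are entered for construction zones, so the circular zone shape, the radius field, the sample coordinates, and the names `KeepOutZone`, `haversine_m`, and `active_warnings` are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

@dataclass
class KeepOutZone:
    label: str       # e.g. "Construction"
    lat: float       # zone center, decimal degrees
    lon: float
    radius_m: float  # assumed circular zone; the entry does not specify a shape

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two GPS points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def active_warnings(user_lat, user_lon, zones):
    """Return a caution message for every KOZ the user is currently inside."""
    return [
        f"Caution - {zone.label}"
        for zone in zones
        if haversine_m(user_lat, user_lon, zone.lat, zone.lon) <= zone.radius_m
    ]

if __name__ == "__main__":
    # Arbitrary sample coordinates, not taken from any real Location.
    zones = [KeepOutZone("Construction", 30.0000, -95.0000, 10.0)]
    print(active_warnings(30.0000, -95.0000, zones))  # user at the zone center
    print(active_warnings(30.0010, -95.0000, zones))  # about 111 m to the north
```

Indoor GPS accuracy is often too coarse for a narrow zone, which may be why the entry handles wet floors with a local WiFi transmitter instead of coordinates.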

A universal convention applies to all Locations everywhere, especially for major Destinations such as lobbies, entrances, restrooms, and KOZs. Many large public buildings would upload internal maps so users can plan a visit in advance; the maps would be accessed on the web or via QR codes posted outside on the walkways leading up to each building. In Hearing mode, the system guides the user to a QR code. The AG or mobile device then displays the result and can read off the Destinations.

The user may select either active mode, as described above, or passive mode, in which an RFID interrogator on the mobile device reads RFID tags and sends voice directions to the earpiece or enables map displays.
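Passive mode could be modeled as a lookup from a tag read to an output action, as in the rough sketch below. The tag IDs, the `TAG_TABLE` contents, the action names, and the `handle_tag_read` function are hypothetical; the entry does not describe a tag format or an interrogator API, so the read itself is represented by a plain string.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Destination:
    name: str
    group: str  # e.g. "restrooms", "emergency exits"

# Hypothetical table a Location would maintain during setup.
TAG_TABLE = {
    "TAG-0001": Destination("Men's Restroom", "restrooms"),
    "TAG-0002": Destination("North Entrance", "entrances"),
    "TAG-0003": Destination("Emergency Exit A", "emergency exits"),
}

def handle_tag_read(tag_id, mode, excluded_groups=frozenset()):
    """Map one RFID tag read to an output action for the selected mode.

    Returns None for unknown tags and for Destinations in an excluded group.
    """
    dest = TAG_TABLE.get(tag_id)
    if dest is None or dest.group in excluded_groups:
        return None
    if mode == "hearing":
        return ("speak", dest.name)        # voice direction to the earpiece
    if mode == "visual":
        return ("show_on_map", dest.name)  # highlight on the map display
    raise ValueError(f"unknown mode: {mode}")

if __name__ == "__main__":
    print(handle_tag_read("TAG-0001", "hearing"))
    print(handle_tag_read("TAG-0001", "visual", excluded_groups={"restrooms"}))
```

The `excluded_groups` argument mirrors the option of excluding some Destination groups from announcements.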

All Locations should use a universal visual logo that flashes on the AG or mobile device, together with a unique tone sequence that sounds when the user nears a Location.

IMPLEMENTATION

The plan is to implement this system at NASA Johnson Space Center for its central campus in late 2015. Users will download a QR code reader and the other system software to their tablets and smartphones.

About the Entrant

- Name: Kevin Moore, Pe

- Type of entry: individual

- Patent status: none