In the era of Big Data and Artificial Intelligence, large-scale associative arrays will provide a new paradigm for creating and implementing algorithms that better solve searching and pattern-matching problems.

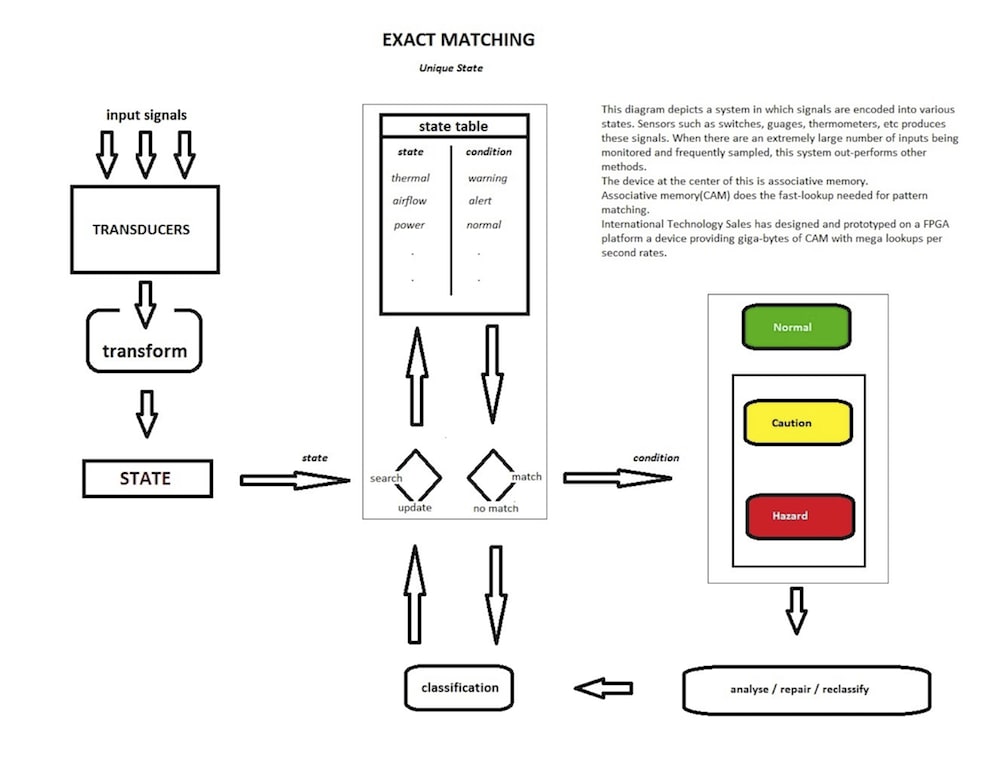

The prototype developed is a content-addressable / associative memory computing device.

A) It exceeds the capabilities of currently available content-addressable memory / associative memory devices:

1) Variable feature vector / key size.

2) The current prototype is configured for a 128-bit-wide feature vector / key.

3) The current prototype's searchable on-board data is 128 bits wide by 512 megabytes deep.

B) Depending on the silicon used for implementation (FPGA, PLD, or ASIC), the feature vector / key size can expand to any bit width that the type and size of the available silicon can accommodate.

C) The available search data width and depth depend on the final hardware design.

The current proof-of-concept prototype shows that the feature vector / key length is restricted only by the hardware (silicon) used, and that the size of the data cache to search against has no restriction other than the silicon used in the design.

Simulation shows the design can run at clock speeds of 4 GHz if implemented using ASIC or custom designed silicon.

Current clock speeds are limited by the speed of the device on the development board and by the clock speeds the board makes available.

The current prototype operates at one million lookups per second.

Potential customers:

Companies with requirements for increased performance in:

Data Mining, Pattern Recognition (speech, writing, picture, facial), Protein and Genetic Research, Network Routers and Security Appliances.

Essentially any requirement to use a feature vector or key to search against data would use the device. One example is looking up a URL and returning an IP address.
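The URL-to-IP lookup mentioned above can be modeled in software as a minimal sketch. It is not the device's actual design: the class `AssociativeMemoryModel`, the helper `make_key`, and the use of a 128-bit BLAKE2b digest to turn a URL into a key are all assumptions made for illustration, and the IP address is a reserved documentation address. Only the 128-bit key width comes from the prototype description.

```python
import hashlib

KEY_BITS = 128  # key width the current prototype is configured for


def make_key(text: str) -> int:
    """Derive a 128-bit integer key from a string.

    Assumption: the entry does not say how keys are produced; a 16-byte
    BLAKE2b digest is used here only because it is exactly 128 bits.
    """
    digest = hashlib.blake2b(text.encode("utf-8"), digest_size=KEY_BITS // 8).digest()
    return int.from_bytes(digest, "big")


class AssociativeMemoryModel:
    """Software stand-in for an exact-match content-addressable memory."""

    def __init__(self, key_bits: int = KEY_BITS):
        self.key_bits = key_bits
        self._store = {}  # key -> associated data

    def write(self, key: int, data: str) -> None:
        # Reject keys that do not fit in the configured key width.
        if key < 0 or key >> self.key_bits:
            raise ValueError(f"key does not fit in {self.key_bits} bits")
        self._store[key] = data

    def lookup(self, key: int):
        # Return the data stored under the key, or None on a miss.
        return self._store.get(key)


memory = AssociativeMemoryModel()
memory.write(make_key("example.com"), "192.0.2.10")  # documentation-only address

hit = memory.lookup(make_key("example.com"))
miss = memory.lookup(make_key("missing.example"))
```

A Python dictionary resolves the key through hashing on a CPU; the point of a hardware associative memory is to perform the same key-to-data match directly in silicon.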

The development can be thought of as a specialized computing device used to transform specific software tasks into silicon chips and / or other hardware instead of depending on a CPU to perform the task.

About the Entrant

- Name: Clarence Fleming

- Type of entry: team

- Team members: Clarence Fleming, Dempster Welling

- Software used for this entry: Quartus, ModelSim, HSpice & Electric

- Patent status: none