This idea came from analyzing why symmetric multiprocessing (SMP) architectures perform poorly on an intrinsically parallel software task (circuit simulation in Verilog): despite as many as 48 CPUs being available on a chip these days, simulation acceleration usually tops out at about 4x on 8 cores. The two problems with SMP are data-bus congestion and cache misses. The idea behind "wandering threads" (WT) is to alleviate both by moving threads instead of data, which makes more efficient use of CPU caches and takes traffic off the data bus.

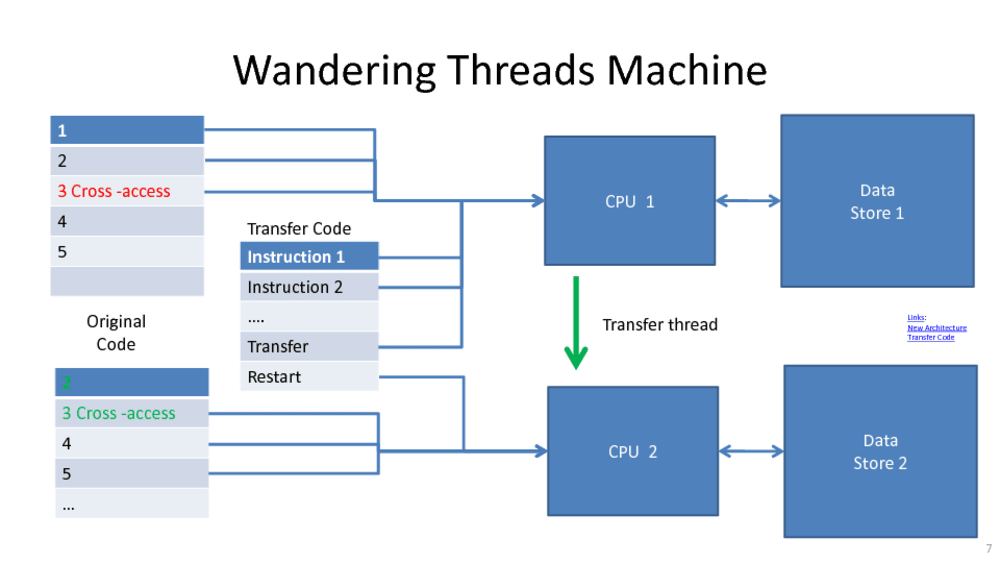

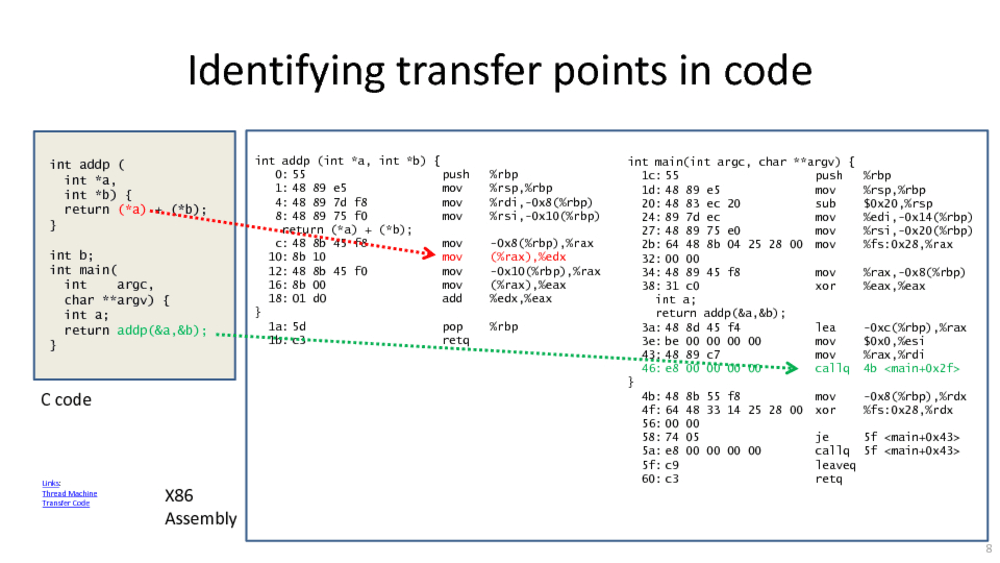

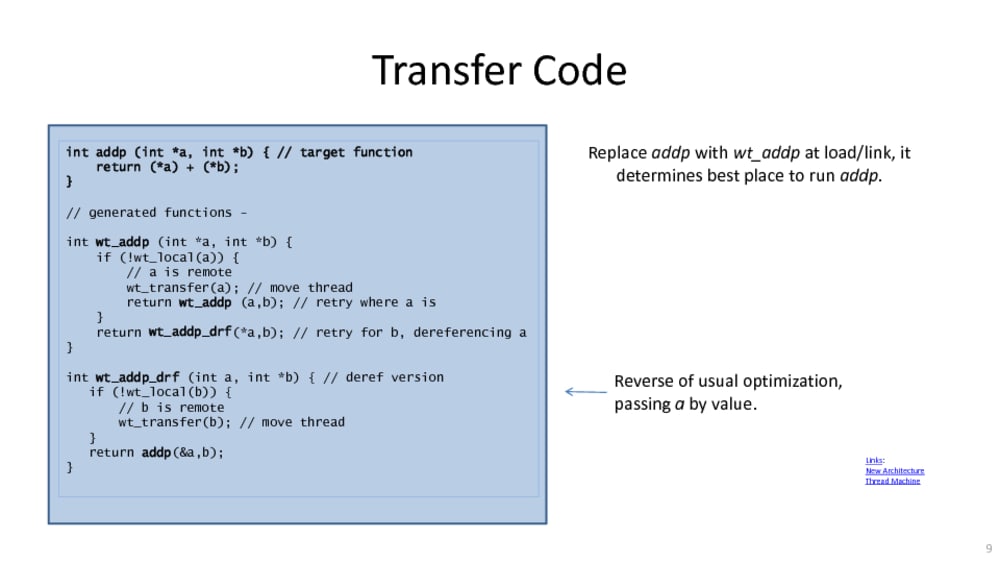

The main technique is to ask, at routine call boundaries, whether a thread should continue on its current CPU or transfer the work to another CPU that is bound more closely to the memory holding the data being operated on. Moving a thread at such a boundary involves less work than requesting data. A data request usually moves at least 8 words (a cache line) across the cache-coherent data bus and involves a request and a return through all cache layers. A thread move is often just a few words, goes in one direction only, and can go directly from one CPU/cache to another CPU/cache. Since thread moves are independent, the CPU-to-CPU communication can be allocated more channels than a common data bus. Adding more CPUs (with caches) and more channels reduces the chance of thread collision, whereas the cache-coherency requirements of a common data bus make it less efficient as CPUs are added.
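The call-boundary decision described above can be illustrated with a toy cost model. This is only a sketch under stated assumptions, not the patented mechanism: the `Call` record, the function names, and the one-word-request-plus-returned-line fetch cost are all hypothetical, and only the 8-word cache line comes from the text.

```python
from dataclasses import dataclass

CACHE_LINE_WORDS = 8  # from the text: a cache line is usually at least 8 words


@dataclass
class Call:
    """A routine call about to be made (hypothetical model)."""
    data_home_cpu: int   # CPU bound most closely to the memory holding the data
    lines_touched: int   # cache lines the routine is expected to read
    context_words: int   # words needed to hand the thread to another CPU


def fetch_cost(call: Call) -> int:
    """Words crossing the shared bus if the data is pulled to the thread.

    Assumed model: each line costs a one-word request plus the returned line.
    """
    return call.lines_touched * (1 + CACHE_LINE_WORDS)


def move_cost(call: Call) -> int:
    """Words sent one way, CPU to CPU, if the thread goes to the data."""
    return call.context_words


def choose_cpu(current_cpu: int, call: Call) -> int:
    """Return the CPU that should run the call under this toy model."""
    if call.data_home_cpu == current_cpu:
        return current_cpu          # data is already local; nothing to move
    if move_cost(call) < fetch_cost(call):
        return call.data_home_cpu   # cheaper to move the thread
    return current_cpu              # cheaper to fetch the data
```

For example, under these assumptions a call that touches 4 cache lines held near CPU 3, with a 6-word thread context, compares 6 words for the move against 36 words for the fetch, so `choose_cpu(0, Call(3, 4, 6))` selects CPU 3. A real implementation would also have to weigh channel availability and the load on the destination CPU, which this sketch ignores.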

The processing patterns of circuit simulation are similar to those of neural networks, so this invention enables regular CPU hardware (x86, ARM, MIPS, RISC-V, etc.) to handle AI tasks as well as regular (legacy) code efficiently. Similarly, software that handles large amounts of static data (such as databases) should benefit.

A side effect of moving threads rather than data is that in many cases a common data bus is not required between CPUs, and that greatly improves security. The speculative code execution techniques that led to the Spectre/Meltdown issues with x86 can still be used in a WT machine, because such a machine has no shared physical paths to exploit. Similarly, without the shared data bus, code can reach over larger physical spans, enabling programmers to view large distributed machines the same way they would look at (say) a PC, i.e. it greatly simplifies Cloud-to-Edge computing.

In conclusion, this modification of traditional CPU designs enables greater performance at lower power with better security, and does so without requiring major code rewrites or a massive shift in computing paradigm - "virtualized SMP" allows programmers to continue with current practices for many years to come.

Awards

2018 Top 100 Entries

About the Entrant

- Name: Kevin Cameron

- Type of entry: individual

- Patent status: patented