The perception of our immediate physical environment plays a key role in the human experience. Awareness of our surroundings can be considered a primary survival sense, second only to hearing and sight, and in many ways it is central to what it means to be human.

The advent of the cochlear implant has greatly assisted the hearing impaired in perceiving sound; however, little comparable help has been forthcoming for the visually impaired. When sight is lost, the perception of one’s environment shrinks and, in many ways, this leads to isolation and a disconnect from a fundamental part of being human.

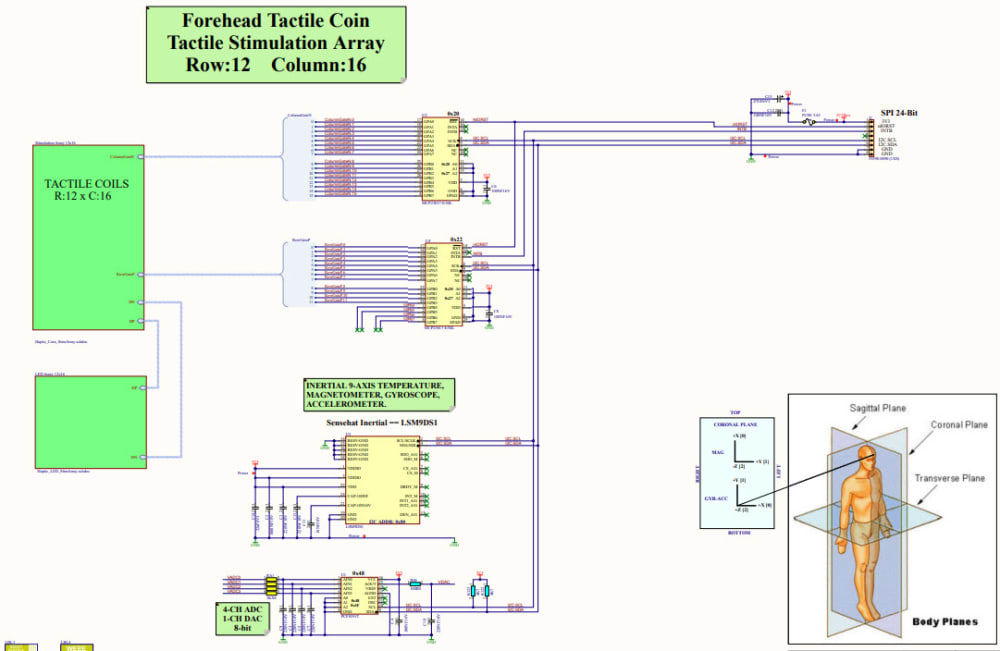

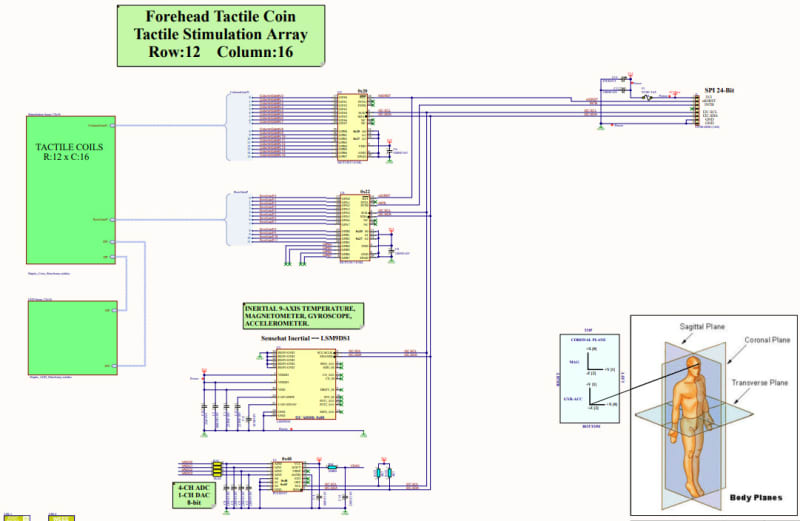

With the availability of low-cost time-of-flight (ToF) 3D imaging, a 3D visual environment can be encoded into an array of haptic vibrations on the forehead, allowing the wearer to perceive external objects in real time. Intensity and vibration signatures derived directly from a forehead-mounted ToF 3D sensor provide a simple means of evaluating environmental structures and navigating them safely.

Haptic encoding of spatial markers, derived from visual spatial data, offers a means of assisting navigation without sight and has immediate commercial and humanitarian potential for the 42 million blind people worldwide. It is hoped that this paper will stimulate interest in the development of a haptic vibration 3D ToF vision encoder.

Decoding the output of head-mounted ToF cameras offers a secondary (disparity-based) means of extracting visual spatial targets from real-time 3D analysis, although ToF sensor arrays using Hadamard filtering offer a simple, reliable and straightforward means of obtaining 3D environmental data. Artificially generating virtual vibration sources that are spatially encoded to visual targets allows visual environmental information to be perceived by anyone.

It is proposed that the position of visual targets be encoded onto a forehead-mounted haptic array of at least 12 elements high by 24 wide, giving a resolution of at least 288 haptic points. The expected instantaneous field of view of a typical ToF sensor is in the order of 45° in elevation and 60° in azimuth.
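To make the proposed mapping concrete, the following is a minimal sketch of how a target direction might be assigned to one element of a 12 x 24 array covering a 60° by 45° field of view. The function name, the sign conventions (positive azimuth to the right, positive elevation upward, row 0 at the top of the forehead) and the uniform angular spacing are all assumptions made for illustration; they are not taken from the entry itself.

```cpp
#include <optional>
#include <utility>

// Array size and field of view as quoted in the text.
constexpr int kRows = 12;              // haptic elements, vertical
constexpr int kCols = 24;              // haptic elements, horizontal
constexpr double kAzimuthFovDeg = 60.0;
constexpr double kElevationFovDeg = 45.0;

// Hypothetical helper: maps a direction relative to the sensor axis to a
// (row, col) element of the haptic array, or std::nullopt if the direction
// lies outside the field of view.
// Assumed conventions: 0/0 is straight ahead, positive azimuth is to the
// right, positive elevation is up, row 0 is the top row, col 0 the leftmost.
std::optional<std::pair<int, int>> cellForDirection(double azimuthDeg,
                                                    double elevationDeg) {
    // Normalise to [0, 1): u runs left to right, v runs top to bottom.
    const double u = (azimuthDeg + kAzimuthFovDeg / 2.0) / kAzimuthFovDeg;
    const double v = (kElevationFovDeg / 2.0 - elevationDeg) / kElevationFovDeg;
    if (u < 0.0 || u >= 1.0 || v < 0.0 || v >= 1.0) {
        return std::nullopt;  // target is outside the sensor's view
    }
    const int row = static_cast<int>(v * kRows);
    const int col = static_cast<int>(u * kCols);
    return std::make_pair(row, col);
}
```

Under these assumptions each element covers 2.5° of azimuth and 3.75° of elevation, so a target straight ahead falls on row 6, column 12.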

The ability to perceive visual targets in near real time is expected to greatly assist navigation within the surrounding visual environment, which is currently closed to the visually impaired.

For the visually impaired, the inability to perceive the nearby environment in many ways shrinks their world to what can be heard and what can be reached by touch or a white stick.

A forward-facing, head-mounted ToF camera combined with an array of haptic transducers offers an alternative means of perceiving objects within the immediate environment, extending the wearer's perceptual world by at least 5 meters.
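As a rough illustration of how a measured distance could drive a single haptic transducer, the sketch below maps depth to an 8-bit drive level, with nearer objects vibrating more strongly. Only the 5 meter range comes from the text; the linear ramp, the 0.2 m near limit, the 8-bit output and the function name are assumptions chosen for the example, and a real device might well use a different curve or vibration signature.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

constexpr double kMaxRangeM = 5.0;  // perceptual range quoted in the text
constexpr double kMinRangeM = 0.2;  // assumed near limit (not from the text)

// Hypothetical helper: converts a ToF depth reading in meters to a drive
// level in [0, 255]. Readings that are invalid or at/beyond the maximum
// range produce no vibration; readings closer than the near limit saturate.
std::uint8_t intensityForDepth(double depthM) {
    if (!std::isfinite(depthM) || depthM <= 0.0 || depthM >= kMaxRangeM) {
        return 0;
    }
    const double d = std::max(depthM, kMinRangeM);
    // t is 1.0 at the near limit and falls linearly to 0.0 at maximum range.
    const double t = (kMaxRangeM - d) / (kMaxRangeM - kMinRangeM);
    return static_cast<std::uint8_t>(std::lround(t * 255.0));
}
```

A linear ramp is the simplest choice; it keeps the relationship between distance and vibration strength easy to learn, at the cost of spending little of the output range on the nearest and most urgent obstacles.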

About the Entrant

- Name: Clive Boyd

- Type of entry: team

- Team members: Clive Boyd, David Ng

- Software used for this entry: C++ Linux

- Patent status: none